Scammers no longer target only bank accounts and personal data: advertising budgets, affiliate networks, and marketing funnels are under threat too. Fraud has penetrated every digital channel.

While corporate security departments patch holes in infrastructure, attackers have long shifted to a more vulnerable link — digital identity and human psychology. According to Juniper Research, global losses from online fraud will exceed $362 billion by 2026. And it's no longer about how well servers are protected: attacks increasingly look like completely legitimate actions by the users themselves.

Below are five dominant schemes that call for revising corporate security strategies now.

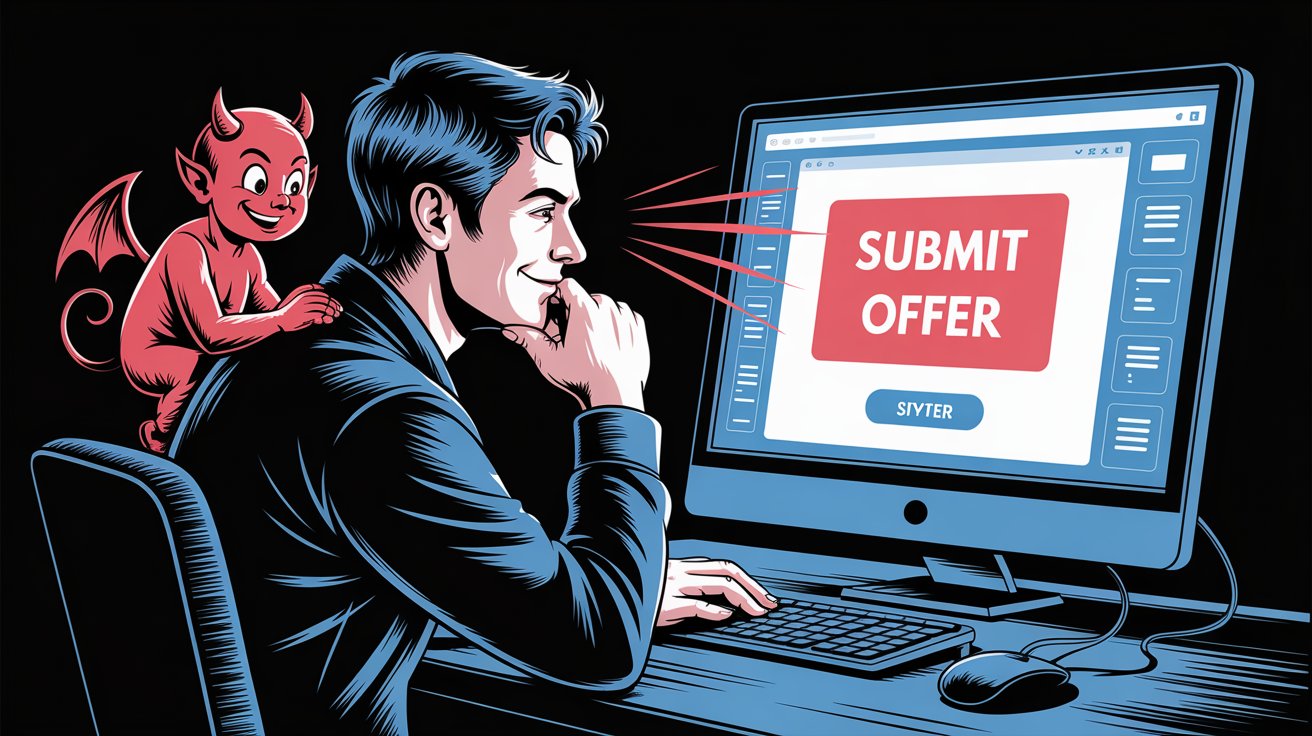

1. APP Fraud: When the Victim Presses "Send" Themselves

Authorized Push Payment (APP) fraud is one of the most insidious types of fraud precisely because everything happens correctly from a technical standpoint. The victim authorizes the transfer themselves: enters the PIN, passes biometrics, confirms the operation in the app. The bank sees a legitimate transaction from a trusted device and does not block it.

The scheme works through pressure and deception: a call from "bank security," a message about suspicious activity, urgency — and the person transfers the money themselves while the scammer keeps them on the line.

Why is it so hard to stop? Disputing such a payment via chargeback is practically impossible: the authorization was voluntary. In the UK, where APP fraud is particularly widespread, losses amounted to over £460 million in the first half of 2024 alone, and the trend continues to grow.

What actually works in 2026: a shift from transaction control to behavioral biometrics. Next-generation systems analyze micro-patterns: atypical swipe speed, unusual time of day, active incoming call during the transfer, hand tremors when entering the amount. If the behavior deviates from the user's norm, the transaction is suspended for additional verification.
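The idea of comparing a session against the user's own norm can be sketched as follows. This is a minimal illustration, not a production model: the `SessionSignals` fields, the z-score limit of 3.0, and the "two flags out of three" rule are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class SessionSignals:
    """Hypothetical per-transfer signals; names and units are illustrative."""
    swipe_speed: float   # arbitrary unit, e.g. pixels per millisecond
    hour_of_day: int     # 0..23, local time of the transfer
    call_active: bool    # an incoming call is in progress during the transfer


def z_score(value, history):
    """How many standard deviations `value` lies from the user's own history."""
    if len(history) < 2:
        return 0.0
    sigma = stdev(history)
    if sigma == 0:
        return 0.0  # no variance to compare against; treat as no signal
    return abs(value - mean(history)) / sigma


def should_hold(current, past_sessions, z_limit=3.0):
    """Suspend the transfer for extra verification if 2+ signals deviate."""
    if not past_sessions:
        return False  # no baseline yet; other controls must cover new users
    speed_z = z_score(current.swipe_speed, [s.swipe_speed for s in past_sessions])
    unusual_hour = current.hour_of_day not in {s.hour_of_day for s in past_sessions}
    risk_flags = sum([speed_z > z_limit, unusual_hour, current.call_active])
    return risk_flags >= 2
```

Requiring two independent deviations rather than one is a design choice meant to keep false holds down: a call during a transfer is common on its own, but a call combined with an unusual hour or atypical input behavior is much rarer.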

2. Synthetic Identities: The Customer Who Doesn't Exist

Imagine the perfect borrower: a clean credit history, a stable address, a real Social Security Number. The bank checks the data — everything matches. One problem: this person does not exist.

Synthetic ID fraud is the creation of "Frankenstein identities." Scammers take a real identifier (passport number, SSN) — often belonging to a child, a pensioner, or a deceased person — and combine it with a fictional name, address, and biography. The result is a synthetic character that begins to live its own "digital life."

The bust-out scheme works in several stages:

- For the first few months, the account behaves exemplarily: small purchases, timely payments, credit score growth.

- After 6–18 months, the "customer" requests the maximum loan or takes out a large installment plan (BNPL).

- Having received the money, they disappear. The account is abandoned, and the debt is written off as bad.
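The stages above suggest a simple rule-based screen for the moment the large request arrives. The sketch below is only a heuristic under stated assumptions: the `AccountSnapshot` fields, the 10x spend multiplier, and the "three reasons" review threshold are hypothetical, and a flawless payment history on its own is of course also what a good customer looks like.

```python
from dataclasses import dataclass


@dataclass
class AccountSnapshot:
    """Hypothetical view of an account at the time of a new credit request."""
    age_months: int
    on_time_payment_ratio: float  # 0.0..1.0
    avg_monthly_spend: float
    current_limit: float
    requested_amount: float       # new loan or BNPL request


def bust_out_reasons(acc, min_age=6, max_age=18, spike_factor=10.0):
    """Collect the bust-out indicators that this request matches."""
    reasons = []
    if min_age <= acc.age_months <= max_age:
        reasons.append("account age in the 6-18 month window")
    if acc.on_time_payment_ratio >= 0.99:
        reasons.append("flawless payment history")
    if acc.avg_monthly_spend > 0 and acc.requested_amount >= spike_factor * acc.avg_monthly_spend:
        reasons.append("request far above usual spend")
    if acc.requested_amount >= acc.current_limit:
        reasons.append("request at or above the maximum limit")
    return reasons


def needs_review(acc):
    """Route to manual review only when several indicators coincide."""
    return len(bust_out_reasons(acc)) >= 3
```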

The threat to fintech and retail is particularly high: traditional scoring models let some of these applications through because part of the data is real and present in credit bureau databases. According to Forbes Advisor, synthetic ID fraud costs the US financial industry more than $20 billion annually.

Countering this requires verification through multiple independent sources and analysis of the "digital footprint" — online behavior, devices, geolocation — in aggregate, rather than individually.

3. Account Takeover (ATO): Your Personal Account Is No Longer Yours

Account Takeover is not a new threat, but in 2026 it operates on an industrial scale. Attackers no longer break into accounts by hand: they buy ready-made tools and leaked databases on shadow marketplaces.

Two main tools:

- Credential Stuffing: automated testing of login/password pairs taken from other services' leaks. People reuse the same passwords: if your password leaked from a hacked forum, a script will automatically try it on 500 other sites within a few hours.

- Phishing-as-a-Service: renting ready-made phishing kits. For $50–200 a month, a scammer gets an exact copy of a bank's or marketplace's interface, a victim management panel, and automated mailing. No technical knowledge required.
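Credential stuffing tends to leave a recognizable footprint in login logs: one source trying many different usernames with almost all attempts failing. A rough sketch of that check is shown here; the event tuple layout and both thresholds are assumptions for illustration, and real attacks that rotate IP addresses would need additional signals such as device fingerprints.

```python
from collections import defaultdict


def suspicious_ips(login_events, min_usernames=20, min_failure_rate=0.9):
    """Flag IPs that try many distinct usernames and mostly fail.

    login_events: iterable of (ip, username, success) tuples (assumed format).
    """
    usernames = defaultdict(set)
    attempts = defaultdict(int)
    failures = defaultdict(int)

    for ip, username, success in login_events:
        usernames[ip].add(username)
        attempts[ip] += 1
        if not success:
            failures[ip] += 1

    flagged = []
    for ip in attempts:
        failure_rate = failures[ip] / attempts[ip]
        if len(usernames[ip]) >= min_usernames and failure_rate >= min_failure_rate:
            flagged.append(ip)
    return sorted(flagged)
```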

The consequences for business go beyond direct financial losses: compromised accounts are used to withdraw bonus points, steal linked cards, make fraudulent refunds, and launder money. Reputational damage and customer churn often cost more than the theft itself.

4. AI in the Hands of Scammers: The Democratization of Cybercrime

Generative AI has made professional fraud accessible to amateurs — and this is perhaps the main trend of 2026.

- Flawless phishing. Previously, scam emails were given away by typos and awkward phrasing. Today, LLMs generate flawless texts in any language, with the right tone, personalization for a specific recipient, and the target company's corporate style.

- Real-time deepfakes. Many verification services use liveness checks: "turn your head," "blink," "say a word." In 2025–2026, neural networks learned to bypass these checks by superimposing a synthetic video image over the real camera stream. The KYC procedure is successful — but there is no one behind the screen.

- Automated attacks. AI agent-based bots test security systems, select bypass methods, and scale attacks without human intervention.

What this means for business

Protecting corporate infrastructure in 2026 is not a matter of isolated measures but of a multi-layered strategy in which each layer complements the others:

- Behavioral analytics instead of static rules — systems that know each user's "normal" pattern.

- ML detection models at the onboarding stage — anomalies are easier to catch during registration than after the first transaction.

- Cross-channel monitoring — fraud often starts in one channel and is executed in another.

- Updating KYC procedures to account for deepfake threats: live interaction and documentary verification through independent sources.

Scammers adapt quickly. The only way to stay one step ahead is to build security systems that learn alongside the threat, rather than reacting to it after the fact.

5. AdTech Fraud: Invisible Ad Budget Losses

While businesses protect payment data and customer accounts, advertising budgets leak through another hole — and often unnoticed. According to Juniper Research, industry losses from ad fraud will exceed $172 billion in 2026. Money is deducted, reports look great, but there are no real customers behind this traffic.

AdTech fraud is an ecosystem of schemes aimed at imitating legitimate ad activity: clicks, impressions, installs, conversions. Let's look at the key ones.

Click Fraud and Bot-clicking

The most common scheme. Bots or farms of real devices click on ads, simulating audience interest. The advertiser pays for every click — and pays for nothing.

Modern botnets can reproduce human behavior: random pauses, page scrolling, mouse movement. Simple IP or User-Agent checks no longer work. They can only be detected through the analysis of behavioral patterns at the session level — time on site, scroll depth, click heatmaps.
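As a rough illustration of session-level analysis, the sketch below derives flags from time on site, scroll depth, and the regularity of click timing. The `ClickSession` fields and all thresholds are hypothetical; a sophisticated bot that randomizes its pauses would pass these particular checks, so in practice such flags are only one input among many.

```python
from dataclasses import dataclass
from statistics import pstdev
from typing import List


@dataclass
class ClickSession:
    """Hypothetical per-session metrics collected after an ad click."""
    time_on_site_s: float
    scroll_depth_pct: float          # 0..100
    click_intervals_s: List[float]   # seconds between consecutive clicks


def bot_flags(session):
    """Return the behavioral red flags this session triggers."""
    flags = []
    if session.time_on_site_s < 2:
        flags.append("left almost instantly")
    if session.scroll_depth_pct == 0:
        flags.append("no scrolling")
    # Humans click irregularly; near-zero spread suggests a scripted timer.
    if len(session.click_intervals_s) >= 3 and pstdev(session.click_intervals_s) < 0.05:
        flags.append("clicks at near-constant intervals")
    return flags
```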

SDK Spoofing and Fake Installs (Mobile Ad Fraud)

A critical threat to mobile marketing. Scammers intercept legitimate install signals from real apps and use them to claim credit for conversions that actually occurred organically or through another channel.

The advertiser sees excellent CPI campaign metrics, pays the commission — but the traffic was bought from another source or came on its own. E-commerce and gaming apps with high install payouts are particularly vulnerable.
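One heuristic often used against hijacked attribution, which the text here does not describe and is introduced only as an illustration, is checking the click-to-install time: an install reported a couple of seconds after the claimed click is physically implausible, and one reported days later is unlikely to be related. The function name and both time bounds below are assumptions.

```python
from datetime import datetime, timedelta


def ctit_verdict(click_time, install_time,
                 min_plausible=timedelta(seconds=10),
                 max_plausible=timedelta(hours=24)):
    """Classify an attributed install by its click-to-install time."""
    delta = install_time - click_time
    if delta < timedelta(0):
        return "invalid: install precedes click"
    if delta < min_plausible:
        return "suspicious: too fast for a real download"
    if delta > max_plausible:
        return "suspicious: click likely unrelated to install"
    return "plausible"


# Usage: a claimed click two seconds before the install is flagged.
click = datetime(2026, 1, 1, 12, 0, 0)
print(ctit_verdict(click, click + timedelta(seconds=2)))
```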

Domain Spoofing

A scammer sells ad impressions, passing off cheap traffic from low-quality sites as premium publisher inventory. The bid metadata might indicate forbes.com, for example, but the ad is actually shown on an anonymous site with an artificially inflated audience.

The advertiser loses twice: the brand appears in an undesirable context, and it pays premium media rates for the placement.
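A basic consistency check for this scheme compares the domain declared in the bid metadata with the page where the ad was actually rendered. The sketch assumes that some measurement script reports the real page URL, which is a hypothetical setup, and it treats a missing URL as a mismatch.

```python
from urllib.parse import urlparse


def normalize(host):
    """Lowercase a hostname and drop a leading 'www.' for comparison."""
    host = host.lower().strip(".")
    return host[4:] if host.startswith("www.") else host


def is_domain_spoofed(declared_domain, observed_page_url):
    """True if the rendered page does not belong to the declared domain."""
    observed_host = urlparse(observed_page_url).hostname or ""
    declared = normalize(declared_domain)
    observed = normalize(observed_host)
    # Accept the declared domain itself and its subdomains, nothing else.
    return not (observed == declared or observed.endswith("." + declared))
```

Matching on a leading dot before the declared domain matters: without it, a lookalike such as `notforbes.com` would pass as `forbes.com`.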

Affiliate Fraud

Unscrupulous partners fake conversion events: applications, registrations, leads. Technically, the form is filled out, the pixel fires, and the CRM receives a record, but there is no real person behind it who intends to buy. This is especially relevant for financial offers and e-commerce with cost-per-lead models.
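Fake leads of this kind can sometimes be caught by simple quality checks before the partner is paid. The sketch below flags forms completed implausibly fast and phone numbers reused across leads; the dictionary keys and thresholds are hypothetical and would depend on what the lead form and CRM actually record.

```python
from collections import Counter


def flag_leads(leads, min_fill_seconds=3.0, max_per_phone=1):
    """Return {lead_id: [reasons]} for leads that look fabricated.

    leads: list of dicts with assumed keys 'id', 'phone', 'fill_seconds'.
    """
    phone_counts = Counter(lead["phone"] for lead in leads)
    flagged = {}
    for lead in leads:
        reasons = []
        if lead["fill_seconds"] < min_fill_seconds:
            reasons.append("form completed faster than a person could type")
        if phone_counts[lead["phone"]] > max_per_phone:
            reasons.append("phone number reused across leads")
        if reasons:
            flagged[lead["id"]] = reasons
    return flagged
```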

Why AdTech Fraud is Harder to Catch than Financial Fraud

Financial fraud leaves a trace in transactions, so it can be disputed or tracked. Ad fraud disguises itself as normal metrics: CTR is within range, geography matches the target region, and devices look like real smartphones. Without specialized traffic analysis at the individual session level, fingerprint signals, and cross-campaign patterns, the losses remain invisible.

That is why detecting ad fraud requires specialized tooling, not just an add-on to standard antifraud systems.

"AdTech fraud requires special attention: it is systematically underestimated in corporate risk assessments, although it is precisely here that losses are often the most predictable and preventable, provided the right detection tools are in place."

Intelligent Protection of Your Online Advertising with ClikBy

The ClikBy platform integrates into your ad infrastructure as an independent security layer, alongside your pixels rather than instead of them. Pixels continue to collect data for analytics and optimization. Meanwhile, ClikBy analyzes over 130 signals for every click in real time and blocks fraudulent traffic before the budget is deducted.

Additionally, the system cleans your retargeting audiences of bots — so that Facebook and Google algorithms learn only from real users. This improves the quality of lookalike audiences and the efficiency of automated strategies.

- Ensemble Machine Learning: we combine 5+ ML models to recognize synthetic identities.

- Zero-Trust Attribution: we verify every click, preventing bots from hijacking organic traffic.

- Adaptive Thresholds: the system automatically lowers filter strictness during sales periods, minimizing false positives.

Read more about the mechanics of ad fraud in our article: how to recognize click fraud in your ads.

Set up antifraud protection for your ads (Yandex.Direct, Google Ads, Meta Ads, and other platforms)